Automatically scored orals

Formative evaluations are one of the best ways to help students understand where they are now and what they need to do to achieve the objectives. The problem with formative oral evaluations is that students get very anxious and refuse to even try unless it counts because they don’t want the teacher to judge them harshly. Teachers are also very reluctant to give formative oral evaluations because there isn’t enough time in the semester to run every oral exam twice. Wouldn’t it be great if we could automate formative oral evaluations?

Hey! Talk to my robot before you do the summative evaluation with me.

That’s what I have been working on recently. Here’s a sneak peek at an early version of an oral evaluation plugin I am building for Labodanglais.com. The finished version should be ready in spring 2022.

The evaluations shown in the videos below are simple, but the system will be able to evaluate orals at every level, from simple “introduce yourself” activities to “read aloud” pronunciation tasks to less regulated “persuasive oral arguments on a moral controversy.” Any speaking task that has a length requirement (word count), required language (target structures), and required grammar accuracy (grammar check) could be evaluated with this system. Some basic training in how to find and interpret the feedback will probably be needed as well.

Introduce yourself

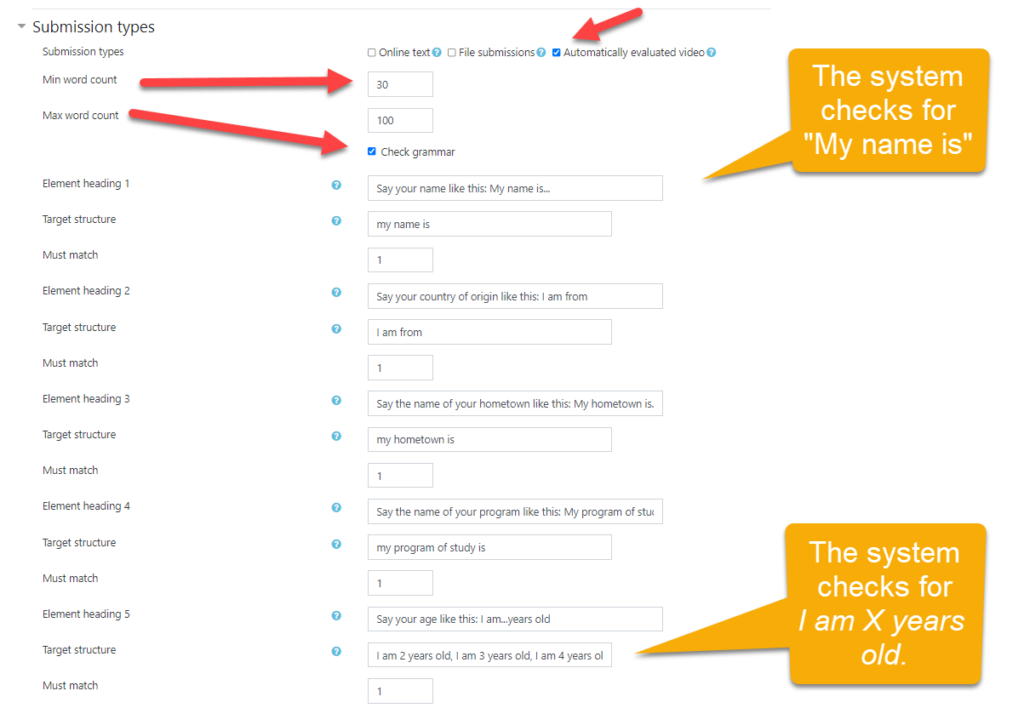

This speaking task is very simple. The student simply has to complete the structural frame with his or her own information. The system counts the words in the transcript and tries to find matches for predefined target phrases.

Here are the automatically evaluated video settings. Note how there is one phrase to match for each of the target structures except for the age, which lists every value separately: “I am 2 years old, I am 3 years old, I am 4 years old,” and so on. In this case, I have not included other valid alternatives for “I am from,” such as “My country of origin is.” These alternatives are easy to add, but listing the full range of valid alternatives can be a challenge. In any case, the goal of this activity is to introduce beginners to “I am from.” Providing focus on specific target structures is necessary and important if you want to help students expand their linguistic repertoire of structural frames.
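To make the idea concrete, here is a minimal sketch in Python of how word counting and comma-separated phrase matching could work. It is not the plugin's actual code. The function name `evaluate_transcript`, the labels, and the minimum word count are all assumptions made up for illustration.

```python
import re

def evaluate_transcript(transcript, target_structures, min_words):
    """Count words and look for each target structure in a transcript.

    target_structures maps a label (hypothetical, e.g. "origin") to a
    comma-separated string of accepted phrases.
    """
    # Lowercase, replace punctuation with spaces, and collapse whitespace
    # so that "old, and" still matches "old and".
    normalized = re.sub(r"[^a-z0-9' ]+", " ", transcript.lower())
    normalized = " ".join(normalized.split())
    word_count = len(normalized.split())

    # Pad with spaces so phrases only match on whole-word boundaries.
    padded = f" {normalized} "
    matched = {}
    for label, phrase_list in target_structures.items():
        phrases = [p.strip().lower() for p in phrase_list.split(",") if p.strip()]
        matched[label] = any(f" {p} " in padded for p in phrases)

    return {
        "word_count": word_count,
        "long_enough": word_count >= min_words,
        "matched": matched,
    }

# Illustrative settings: one phrase per structure, except the age,
# which lists every accepted value.
settings = {
    "name": "My name is",
    "age": ", ".join(f"I am {n} years old" for n in range(2, 100)),
    "origin": "I am from",
}
result = evaluate_transcript(
    "Hello! My name is Anna. I am 19 years old, and I am from Quebec.",
    settings,
    min_words=10,
)
print(result)
```

A student who says “My country of origin is Canada” would not get credit for the “origin” structure with these settings, which is exactly the limitation described above.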

Currently, the system only accepts a comma-separated list. If we want to allow greater variability, we must list all possible variations. But what you see here is only an early version of the plugin, a proof of concept. Instead of listing all of the possible forms a target structure might take with an element in the middle that varies, in future versions, we could allow REGEX (regular expressions) so that we could check for phrases with variables, like this: (I am )([0-9]+)( years old). If you are not familiar with REGEX, I should explain that parentheses group characters together while brackets define a set of allowed characters, so [0-9] means any single digit from zero to nine. The + means “one or more,” so numbers with more than one digit also match.
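Here is a short Python sketch of that pattern in action. It only illustrates the regular expression itself, not how the plugin would store or apply it. The helper name `find_age` and the choice to ignore capitalization are my assumptions.

```python
import re

# The pattern from the text, with word boundaries added and case ignored
# (both additions are assumptions, not plugin behaviour).
AGE_PATTERN = re.compile(r"\b(I am )([0-9]+)( years old)\b", re.IGNORECASE)

def find_age(transcript):
    """Return the age stated in the transcript, or None if the frame is missing."""
    match = AGE_PATTERN.search(transcript)
    # Group 2 is the bracketed part: the digits between the fixed phrases.
    return int(match.group(2)) if match else None

print(find_age("Hi, I am 19 years old."))
print(find_age("I am from Quebec."))
```

One pattern replaces the whole list of ages. Note that it only matches ages written as digits, so a transcript that spells the number out (“nineteen”) would still need its own alternative.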

Tell the soup joke

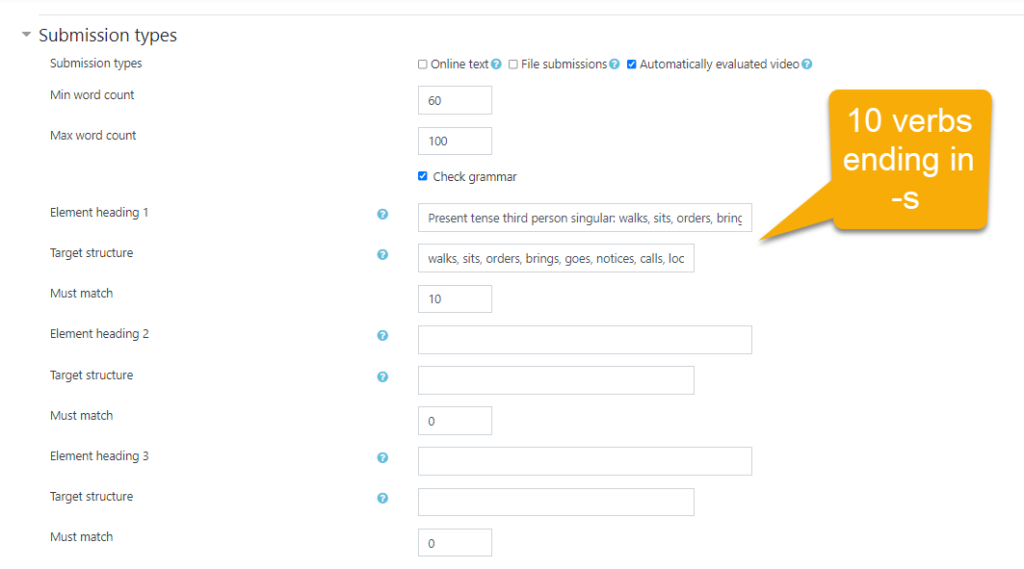

This speaking task is a kind of pronunciation test. French-speaking ESL students have difficulty pronouncing the -s at the end of verbs and plurals. The system counts the words in the transcript and tries to find matches for the list of verbs that end in -s. When students realize that they haven’t pronounced all of the 3rd person singular verbs, they might be motivated to try again and bring that aspect of their pronunciation under greater conscious control.

Here you can see the automatically evaluated video settings. Note how there is only one list of comma-separated target structures: walks, sits, orders, brings, goes, notices, calls, looks, turns, says. Jokes are carefully scripted narratives that are designed to lead the listener to create a mental model based on one kind of logic, only to replace that logic with a surprising and unexpected new logic, so getting the verbs right is important. You don’t want to confuse your listener with broken English.

In the future, we could use a part-of-speech tagger instead. POS taggers can tag each word in a text for its part of speech. Taken out of context, the word “notices” could be tagged with either of two parts of speech: a third person singular verb (VBZ) or a plural noun (NNS). But POS taggers can often disambiguate the part of speech for a word from the surrounding linguistic context, like this: “The customer VBZ something strange in his soup.” Allowing parts of speech in the definitions of target structures would make the system much more powerful and allow it to evaluate longer, more open-ended evaluation tasks. For example, jokes tend to use third person singular verbs, so counting and rewarding the presence of VBZ tags would allow the system to score any joke of this type: an X walks into a bar… By the same token, a storytelling task in which the past tense is expected could look for past simple verbs (VBD) and sequencing words (for example, next, after, when, while, finally).
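As a rough sketch of what tag-based scoring might look like, the Python below counts VBZ and VBD tags and sequencing words in a transcript that has already been tagged. The sample sentence is hand-tagged for illustration, and the function name `summarize_tags` is hypothetical. In practice, the (word, tag) pairs could come from a tagger such as NLTK's `pos_tag`, but nothing here reflects how the plugin will actually do it.

```python
from collections import Counter

# Sequencing words taken from the examples in the text.
SEQUENCING_WORDS = {"next", "after", "when", "while", "finally"}

def summarize_tags(tagged_tokens):
    """Summarize a list of (word, tag) pairs that use Penn Treebank tags."""
    tag_counts = Counter(tag for _, tag in tagged_tokens)
    sequencers = [
        word.lower() for word, _ in tagged_tokens
        if word.lower() in SEQUENCING_WORDS
    ]
    return {
        "VBZ": tag_counts["VBZ"],   # third person singular present verbs
        "VBD": tag_counts["VBD"],   # past simple verbs
        "sequencing_words": sequencers,
    }

# Hand-tagged sample (an assumption, not real tagger output).
joke_opening = [
    ("A", "DT"), ("man", "NN"), ("walks", "VBZ"), ("into", "IN"),
    ("a", "DT"), ("restaurant", "NN"), ("and", "CC"),
    ("orders", "VBZ"), ("soup", "NN"),
]
print(summarize_tags(joke_opening))
```

A joke task could then reward a minimum number of VBZ tags, while a storytelling task could reward VBD tags and sequencing words, without listing specific verbs in advance.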

Persuasive orals on a moral controversy

I ask my 102 students to record a persuasive oral introduction with their webcams for each of the 6 argument essays they write during our 15 weeks together. Grading orals is time-consuming and tedious. The introductions can be quite long, and students sometimes forget to include certain required elements. I have to listen carefully to each oral to get the score right. But with automated oral evaluations, I will only need to score their final oral instead of every one. They will get the practice they need, and I will get to enjoy my weekends.

Here’s a list of the required target elements that students must include in their talks.

Target elements

- Identity: start with a greeting

- Unity: create unity by showing that you and your listeners are the same in some way, for example, by showing support for a popular issue

- Credibility: admit to a weakness before mentioning a strength

- Authority: establish your authority by telling your listeners about your credentials and successes to build your clout

- Goal: explain your goal so that listeners know what’s coming by telling your listeners what you intend to do

- Commitment: tell your listeners what you want them to do and remind them that they are already committed in some way

- Curiosity: make your viewers curious about what’s coming

In this demonstration, you hear me talk about an added sugar tax on processed fast food. Although I managed to include most of the seven target elements, I forgot to explain the goal of my talk, sometimes called an advance organizer. The system penalized me for that. Also, I did not conjugate all of my third person verbs, and I did not use enough plurals. There is one false alarm in the feedback, which I have since fixed. Jump to the 3:10 mark to see the feedback.

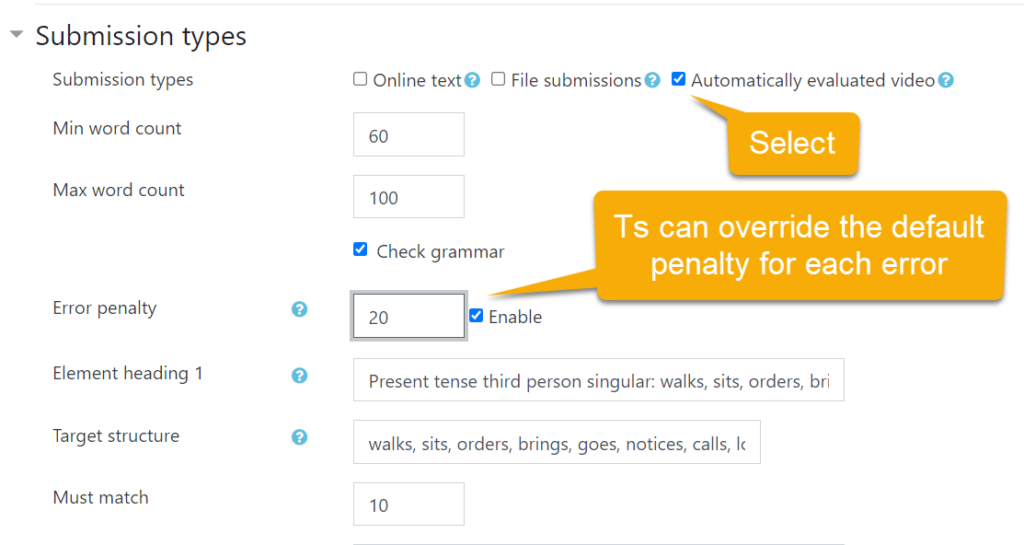

Set the grammar error penalty

Teachers can now set the error penalty or accept the default. Simply click enable and set the percentage penalty for each error.
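The arithmetic behind a per-error penalty could be as simple as the Python sketch below. I am assuming here that the penalty is a number of percentage points subtracted from a score out of 100 for each grammar error and that the score never drops below zero. The function name `apply_error_penalty` and the default of 5 are placeholders, not the plugin's actual default.

```python
def apply_error_penalty(base_score, error_count, penalty_percent=5.0, enabled=True):
    """Subtract a fixed number of percentage points per grammar error.

    base_score is a score out of 100. The default penalty is a placeholder.
    """
    if not enabled:
        # Penalty switched off: the score is left untouched.
        return base_score
    deduction = error_count * penalty_percent
    # Never let the score fall below zero.
    return max(0.0, base_score - deduction)

# Three errors at 5 points each take a 90 down to 75.
print(apply_error_penalty(90, 3, penalty_percent=5.0))
```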

What if the student complains?

Students sometimes feel wounded when they do an activity and don’t get every point they feel they deserve. Teachers encounter these hurt feelings every time we miscalculate a grade from elementary school to the university level. Without a doubt, I expect more than one student will complain that they said something that the robot didn’t catch. How can we handle it?

Do it again.

Maybe your microphone isn’t so good or you did the activity in a room with ambient noise. Telling students to do it again in the college lab seems reasonable when the course has a required lab component and a well-equipped multimedia lab.

Let me listen to it.

When a lot of grading is done by the machine, teachers can switch roles and become plan B evaluators. We can tell students not to worry. We will override the score when we have a free moment.

Digital literacy is part of your education.

A small minority of students resist the use of technology in school settings. There are many ways that they can put pressure on teachers to return to old-school practices. Why do they do it? It’s so that they can stay in their comfort zone and avoid the challenges of entering the learning zone or the terror of the panic zone. Learning takes effort, and digital literacy is an essential part of literacy these days. If computers make you panic, it just means you have more to learn than the kid sitting next to you.

Students might say, “This is an English class. Why are we talking to a robot?” Okay. Try calling a utility company. You’ll be talking to a robot. Pick up a cellphone with Siri or Android, or an Echo Dot with Alexa. People talk to robots all day long.

Smart machines, including speech-recognition-enabled robots, can help optimize efficiency in every field. Language education is no different. Yes, smart machines need to be debugged. Self-driving cars crash sometimes. What matters in language learning is our ability to maximize the repeated exchange of meaningful messages with a focus on accuracy and on target structures.

Conclusion

Good teachers provide good learning opportunities. This plugin could help provide timely and time-efficient formative evaluations. If a robot can help a student catch errors and omissions before his or her scheduled oral, the student will still have a chance to do the work to improve the oral. The impact is bound to be positive.

If the impact on student achievement turns out to be low in comparison to other interventions, we can change our course design to something that works better. I have tried self-assessment, peer-evaluations, checklists, and models before. Students still ask, “Yes, but what can I do to improve my score?” This plugin is intended to begin answering that question. Surely, adding an automated formative oral evaluation before the summative oral exam will seem promising to teachers and therefore worth the effort and expense for me.